
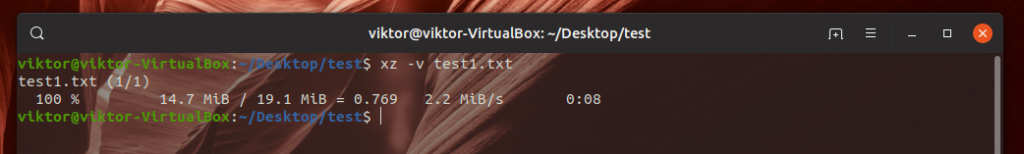

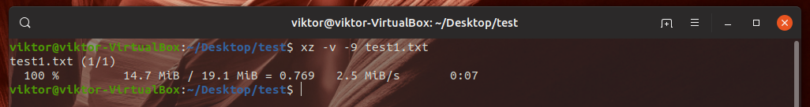

Notes on the individual results:

- Tar itself is an archiving tool; you do not need to compress the archives.
- Standard bzip2 will only use one core (at 100%).
- The pbzip2 process used about 900 MiB RAM at maximum.
- Really fast, but the resulting archive is barely compressed.
- Uses 1 core, and there does not appear to be any multi-threaded variant.
- Standard lzip will only use one core (at 100%).
- Plzip 1.8 (Parallel lzip), default level (`-6`). The plzip process used 5.1 GiB RAM at maximum.
- Standard xz will only use one core (at 100%).
- Parallel PXZ 4.999.9beta using its best possible compression.
- For zstd, `-19` gives the best possible compression (short of the `--ultra` levels) and `-T0` utilizes all cores. If a non-zero number is specified, zstd uses that many cores.

A few minor points should be apparent from the above numbers. All the standard binaries GNU/Linux distributions give you by default for the commonly used compression algorithms are extremely slow compared to the parallel implementations that are available but are not the defaults. This is true for bzip2, where there is a huge difference between 10 seconds and one minute. And it is especially true for lzip and xz, where the difference between one minute and five is significant.
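To get a feel for the gap between the standard and parallel binaries on your own machine, a rough timing loop along these lines may help. It is only a sketch under a few assumptions: it assumes GNU tar (for `-I` with arguments), it uses a tiny generated file as a stand-in for a real source tree such as `linux-5.8.1/`, the directory name `tar-bench-demo` is made up for illustration, and it silently skips any compressor that is not installed. One-second timer resolution is far too coarse for the toy data, so point it at a real tree for meaningful numbers.

```shell
#!/bin/sh
# Rough benchmark sketch (assumes GNU tar; not the script used for the article).
set -eu

demo="${TMPDIR:-/tmp}/tar-bench-demo"   # hypothetical scratch directory
rm -rf "$demo"
mkdir -p "$demo/data"

# Tiny stand-in for a real source tree.
i=0
while [ "$i" -lt 200 ]; do
    printf 'line %s of sample text\n' "$i" >> "$demo/data/sample.txt"
    i=$((i + 1))
done

# Each entry is a program name, optionally followed by its options.
for prog in gzip pigz bzip2 pbzip2 xz lzip plzip "zstd -19 -T0"; do
    name=${prog%% *}                       # program name without options
    command -v "$name" >/dev/null 2>&1 || continue   # skip if not installed
    out="$demo/data.tar.$name"
    start=$(date +%s)
    tar c -I "$prog" -f "$out" -C "$demo" data
    end=$(date +%s)
    size=$(wc -c < "$out")
    echo "$name: $((end - start)) s, $size bytes"
done
```

Each line of output gives the wall-clock seconds and archive size for one compressor, which is enough to compare relative performance on your own hardware.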

`tar` accepts `-I` to invoke any third-party compression utility. Arguments such as `-I plzip` or `-I pigz` will only work if you actually have plzip and pigz installed. Also note that when you use `-I`, you have to put `c` or `x` before it and `-f` after it.

So which should you use? It depends on the level of compression you want and the speed you desire. You may have to pick just one of the two. Speed will also depend heavily on which binary you use for the compression algorithm you pick. As the results show, there is a huge difference between the standard bzip2 binary most (all?) distributions use by default and parallel pbzip2, which can actually use multiple cores.

Note: These tests were done using a Ryzen 2600 with Samsung SSDs in RAID1. The differences between bzip2 and pbzip2, and between xz and pxz, will be much smaller on a dual-core. We could test on slower systems if anyone cares, but that seems unlikely given that only 3 people a month read this article and those three people use Windows, macOS and an Android phone respectively.

The results are what you can expect in terms of relative performance when using tar to compress the Linux kernel with `tar c --algo -f linux-5.8.1.tar.algo linux-5.8.1/` (or `tar cfX linux-5.8.1.tar.algo linux-5.8.1/` or `tar c -I"programname -options" -f linux-5.8.1.tar.algo linux-5.8.1/`). Cache impact was ruled out by running `sync; echo 3 > /proc/sys/vm/drop_caches` between runs. The exact numbers will vary depending on your CPU, number of cores and SSD/HDD speed, but the relative performance differences will be somewhat similar.
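The ordering rule for `-I` (`c` or `x` first, `-f` afterwards) can be sketched as follows. This is a minimal example that assumes GNU tar; the scratch directory `tar-i-demo` and its contents are made up for illustration, and the script falls back to plain gzip when pigz is not installed so that it still runs.

```shell
#!/bin/sh
# Sketch of tar -I usage (assumes GNU tar, where -I means --use-compress-program).
set -eu

demo="${TMPDIR:-/tmp}/tar-i-demo"   # hypothetical scratch directory
rm -rf "$demo"
mkdir -p "$demo/src" "$demo/out"
echo "hello" > "$demo/src/file.txt"

# Prefer the parallel pigz if it is installed, otherwise use standard gzip.
if command -v pigz >/dev/null 2>&1; then
    comp=pigz
else
    comp=gzip
fi

# Create: c comes before -I, -f comes after it.
tar c -I "$comp" -f "$demo/src.tar.gz" -C "$demo" src

# Extract: x comes before -I; GNU tar calls the program with -d to decompress.
tar x -I "$comp" -f "$demo/src.tar.gz" -C "$demo/out"

cat "$demo/out/src/file.txt"
```

Because pigz writes ordinary gzip streams, an archive made this way can still be unpacked on a machine that only has the standard gzip binary.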

Most file archiving and compression on GNU/Linux and BSD is done with the tar utility. Its name is short for tape archiver, which is why every tar command you will ever use has to include the `f` flag to tell it that you will be working on files and not an ancient tape device (note that modern tape devices do exist for server backup purposes, but you will still need the `f` flag for them because they now show up as device files in /dev).

Creating a compressed file with tar is typically done by running `tar` with `c` (create), `f` (file) and a compression algorithm's flag, followed by the files and/or directories to archive. These are not your only options; there are more.
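As a concrete illustration of the `c`, `f` and compression-flag combination, here is a small sketch using gzip (`z`), chosen only because it is installed almost everywhere. The directory `tar-basic-demo` and the file names are invented for the example.

```shell
#!/bin/sh
# Sketch: plain archive versus gzip-compressed archive of the same directory.
set -eu

demo="${TMPDIR:-/tmp}/tar-basic-demo"   # hypothetical scratch directory
rm -rf "$demo"
mkdir -p "$demo/docs"

# Generate some highly compressible sample data.
i=0
while [ "$i" -lt 500 ]; do
    echo "the same line repeated many times" >> "$demo/docs/notes.txt"
    i=$((i + 1))
done

cd "$demo"
tar cf docs.tar docs/        # c = create, f = write to this file, no compression
tar czf docs.tar.gz docs/    # same, with z = compress through gzip
tar tzf docs.tar.gz          # t = list the contents of the compressed archive
wc -c docs.tar docs.tar.gz   # compare the two sizes in bytes
```

Swapping `z` for another algorithm's flag, such as `j` for bzip2 or `J` for xz, works the same way as long as the corresponding binary is installed.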
